The Uniform Mediocrity of Design

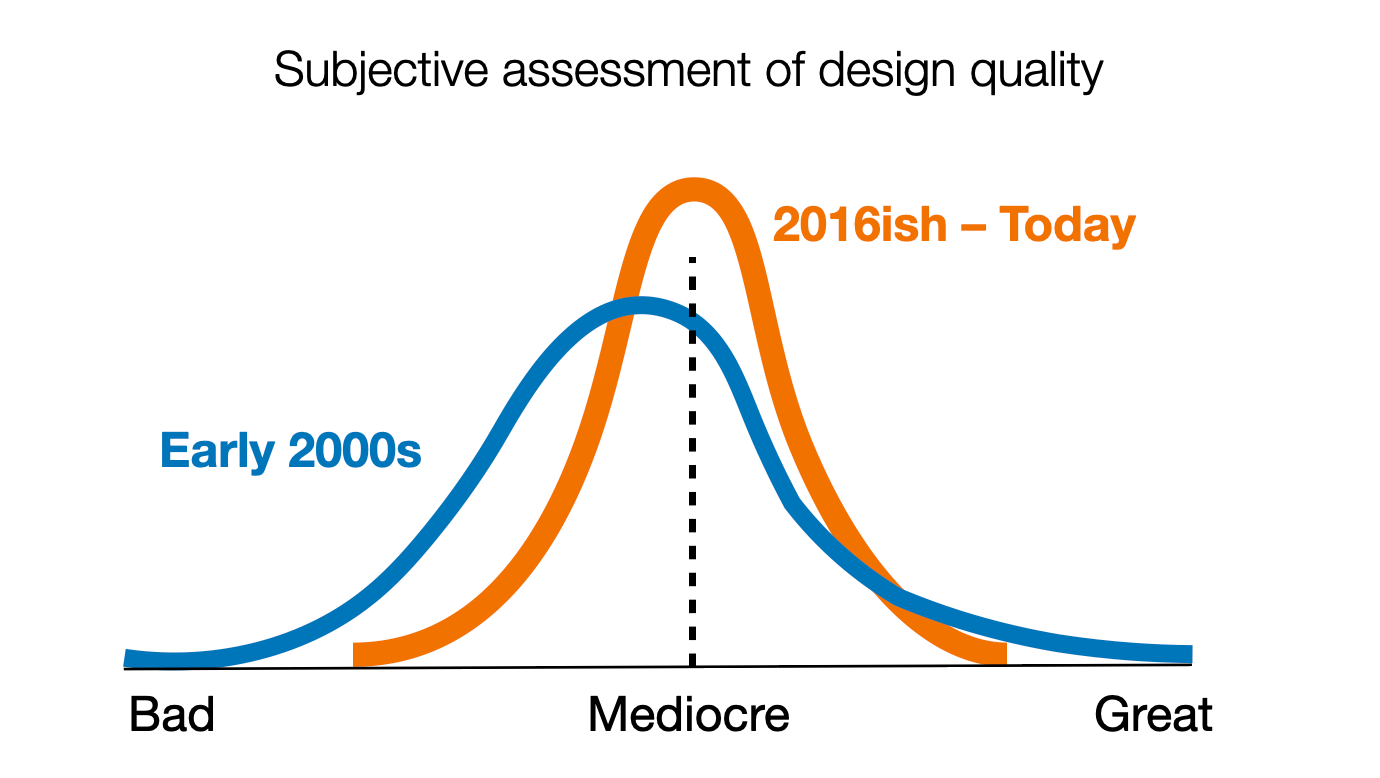

The original thesis of this piece was my frustration that the current state of design has led to a uniform mediocrity of output. While the 'floor had been raised' over the past 20 or so years, so that there are fewer outright bad digital user experiences, it feels to me like the ceiling has lowered. There are no longer the kind of transcendent, or at least compellingly creative, user experiences we saw in early Flickr or Etsy, in how Slack felt when it first arrived on the scene, in weirdo apps like Path, or even in something as basic yet transformative as Writely (which became Google Docs), which shifted how we thought about collaborative tooling.

There are many reasons for this compression in quality, including the standardization and even professionalization of practice and the adoption of frameworks and tools (design systems) that ensure interface consistency. Lenny Laurier goes into deeper detail about these specifics in his essay "Good enough, isn't" (though I think he's too generous in his quality assessment).

Mediocrity is a symptom of a deeper rot in UX/Design

UX/Design teams are associated with the quality of the work they produce. So if that work is uniformly mediocre, UX/Design as a whole becomes associated with mediocrity. The furor within the design community about AI tooling, culminating in the launch of Claude Design last Friday, is an indicator of this. If what this product does or produces is what most people think "design" is, we've already lost.

And what I only recently realized is that UX/Design lost about a decade ago, a condition that had been masked by what appeared to be evident success. A little historical context will help me explain.

Mid-2000s: The Rise of Design

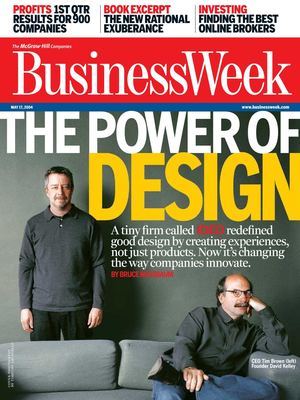

The mid-2000s saw an elevation of Design in the business imagination. IDEO made the cover of BusinessWeek under the banner "The Power of Design."

Apple launched the iPhone in 2007. Mint, a money-tracking app, was acquired by Intuit for $170 million. That might seem small, but the company had about 40 people, was only 2 years old, and its only competitive advantage was its user experience (it was built on top of someone else's technology). Silicon Valley woke up to the business potential of design.

Along with IDEO, design firms (like Adaptive Path, which I cofounded) expanded. Books touting the promise and potential of design flourished. In 2008, Adaptive Path wrote Subject to Change. In 2009, Roger Martin, then dean of the Rotman School of Management at the University of Toronto, wrote The Design of Business: Why Design Thinking Is the Next Competitive Advantage.

During this period, a set of human-centered purposes and ideals emerged that animated a wave of design ambition. It was reasonable to believe that companies would become increasingly design-centric, put people at the center of their decision making, drive innovation, and, in doing so, realize greater business success. Everyone wins!

A.G. Lafley, Procter and Gamble's CEO, made design a priority, insisting on human-centricity throughout the company. Health care providers like the Mayo Clinic and Kaiser Permanente established human-centered innovation labs.

Design had arrived.

2010s: Evident success masked a rotting core

In the 2010s, it appeared as if everything was coming up design. Design consultancies grew, and many got acquired for sizable sums.

In-house design organizations hyperscaled. Capital One's design team grew from about 40 to roughly 400 in about five years. IBM Design began its hiring explosion in 2012, going from 200 designers to thousands in five years. FAANG companies hired aggressively, boosting salary expectations (in 2015, I had to offer a new grad $150,000/yr or lose him to Facebook). A cohort of design executives was established, with VP (or greater) titles, budgetary authority, and other evident markers of success.

But... what looked like success masked an unfortunate reality: as design grew, it also narrowed.

Much of this growth can be attributed to companies adopting agile practices for building software, and following models that suggested for every "product team" there should be a designer. And as these product teams bloomed, so did the need for designers.

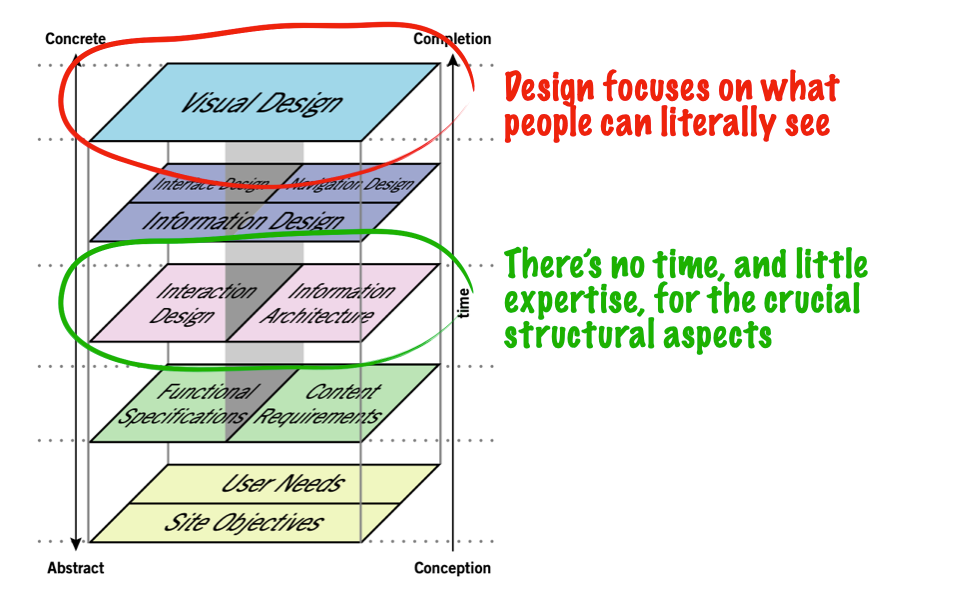

But these designers were often staffed as individuals (partnered with a product manager and some number of engineers), with a focus on the visual UI of the product: screens. The greatest sin a designer could commit was to keep engineers waiting, so they relied on default practices that produced good-enough material quickly enough to keep the engineers busy. The true complexity of software was ignored, which meant deeper practices in interaction design and information architecture became marginalized.

Design had become production

What had gotten lost in the evident success of design is that much of the work was production: the creation of assets to build software. This is why we needed so many designers, to create all these assets. This is why design systems became such a strong focus in the back half of the 2010s, to standardize the look and feel of these assets (so designers don't need to keep producing them over and over again). This is why you could have a UX bootcamp or certification course that took weeks or months instead of years: it taught the very narrow set of skills necessary to find work.

Asset creation is important. Having a consistent set of user interface elements is important. But it's not design — it's production. It's the execution of a standard, not its creation. When I first worked at a design firm in the mid-'90s, I saw how traditional graphic design had two types of roles: the designer, who came up with creative solutions to the client's problems, and the production artist, who figured out how to execute those solutions. There's a parallel in architecture, where the architect is the lead conceptualizer and problem solver, and draftspeople address the nitty-gritty details and make sure the architect's vision is practicable.

In digital product design, we collapsed these two roles into one because, well, we usually had only one designer available to do all the work (see agile teams, above). I first noticed this around 2012, when I went in-house and came across the concept of a "product designer." At that time, a product designer was responsible not only for designing the digital product but was also, to some degree, expected to code it, which meant production. The animating principle was control: designers could ensure that implementation matched their designs. But the practical reality was commodification: what became valued internally was not the brilliant design, but the ability to build it quickly and keep the engineers busy.

UX/Design accepted the role of crank turner, and abandoned the ambitious human-centered value proposition of the prior generation.

So, for about 10 to 15 years, the work most associated with the word 'design' has actually been production. This is what others see coming out of the design team, and this is what design leaders have been tasked to manage. This is where the myth of "the design process" emerged: a set of steps you can just follow to do design.

And so we have a near-generation of designers and design leaders who have only ever operated in this constrained fashion, evaluated on their ability to produce. They have never seen design be truly strategic, set the vision for a company, or be tapped as a resource for unlocking new potential.

This doesn't look like success to me.

2023–present: paying the piper

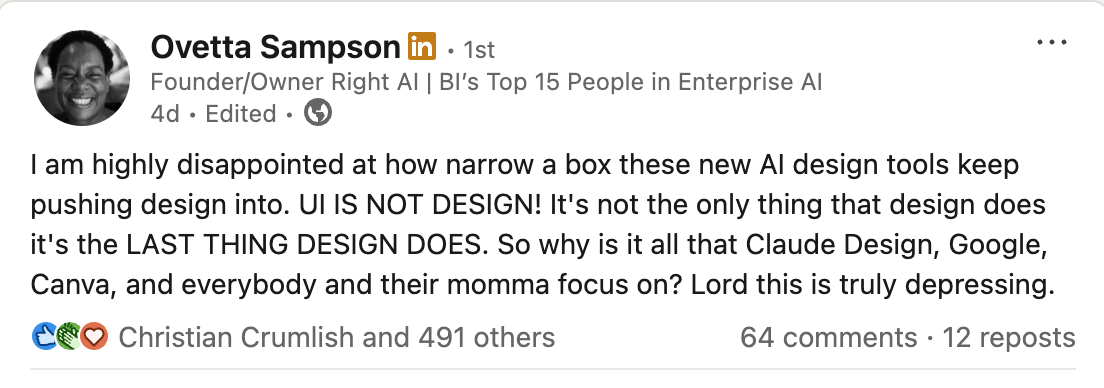

Starting with the layoff wave following pandemic-era overhiring, and now in the AI Moment with its concomitant discombobulation, the perceived threat to design is largely due to this constriction of the work to the production of screens. Ovetta Sampson's frustration is mine as well:

While it all feels sudden, the seeds for this were sown a decade ago. Although it appeared that design and design leaders had never had it better in terms of pay and perceived influence, many were in fact overseeing a house of cards.

A crystal-clear indication of the current state of design was articulated by business and design consultant John Gleason — who came up at P&G during their design transformation — in a recent episode of Finding Our Way. He mentioned how, when folks in the C-suite are looking to solve core business problems, design is not even on the list of functions they turn to — they'll talk to marketing, sales, supply chain, product, finance. The foothold we thought we'd achieved in the 2000s, when CEOs actually did consider design to be a lever for substantive change, fell away during the intervening years as design retreated to a non-strategic function.

Move Left, Young Designer

The conventional response to all of this is that designers need to "move left": get upstream, exercise judgment, be more strategic. Heck, it's my response too: recapture that mid-2000s positioning, when Design was considered a competitive advantage.

But we have to acknowledge that there exists a near-generation of designers and design leaders who don't know of any other world than this one. Telling them to 'be more strategic' is a bit like telling a fish to breathe air. They were trained into this narrow model, evaluated within it, promoted through it, with their success determined by their ability to execute, not to envision. (Yet Another Reason to rail against this fetishization of "craft": it explicitly neglects the aspects of design work that actually produce the most value.)

When asked to imagine futures, all they can produce is the next increment, not because they lack intelligence, but because they were never shown another way. You cannot simply declare that design is now strategic when the profession spent over a decade systematically removing strategic capability from job descriptions, certificate programs, and hiring criteria.

Can we trust those who enabled this to get us out of it?

Which brings me to the people most responsible for this: design leaders themselves.

These were the people with titles, budgets, platforms, and proximity to power. And most of them (not all, but most) chose accommodation over advocacy. In pursuit of their seat at the table, they stopped fighting for anything that might threaten that seat. They managed upward carefully, demonstrated value through delivery velocity, and called it strategic influence.

Here's a challenge I'd put to anyone who disagrees: name a single prominent design leader who espouses an inspiring vision of a human-centered future and has demonstrably gone to the mat for it. Who has risked their position, their relationships, their credibility to push back against the reduction of design to production? LinkedIn is loaded with Design SVPs and CDOs espousing craft excellence and the importance of capitalizing on this AI moment—while neglecting to mention the values and principles of human-centricity and meaning that were foundational to design's initial rise.

Design didn't lose its strategic relevance because organizations took it away. When given the opportunity to lead strategically, Design leaders didn't know how, and simply reacted to their organizations' requests for more screens faster. Design leaders confused increased salary bands, elevated reporting structures, and the appearance of influence for actual organizational relevance.

To revisit Ovetta's comment, when I look at Claude Design, Google Stitch, Canva, products that define design as the production of prototypes, pitch decks, and marketing materials, I don't see AI pushing design into a narrow box. That narrow box (coffin?) is one we made a decade ago, and we are now living with the ramifications.

![[TMA] How design succeeded its way into... irrelevance](https://storage.ghost.io/c/ca/c9/cac97c1b-e5da-46f3-8732-c6daebdd23b2/content/images/size/w2400/2026/04/optimizeli.001.jpeg)